LakeSoul Cloud-Native Lakehouse

Leading technical concept and architecture design

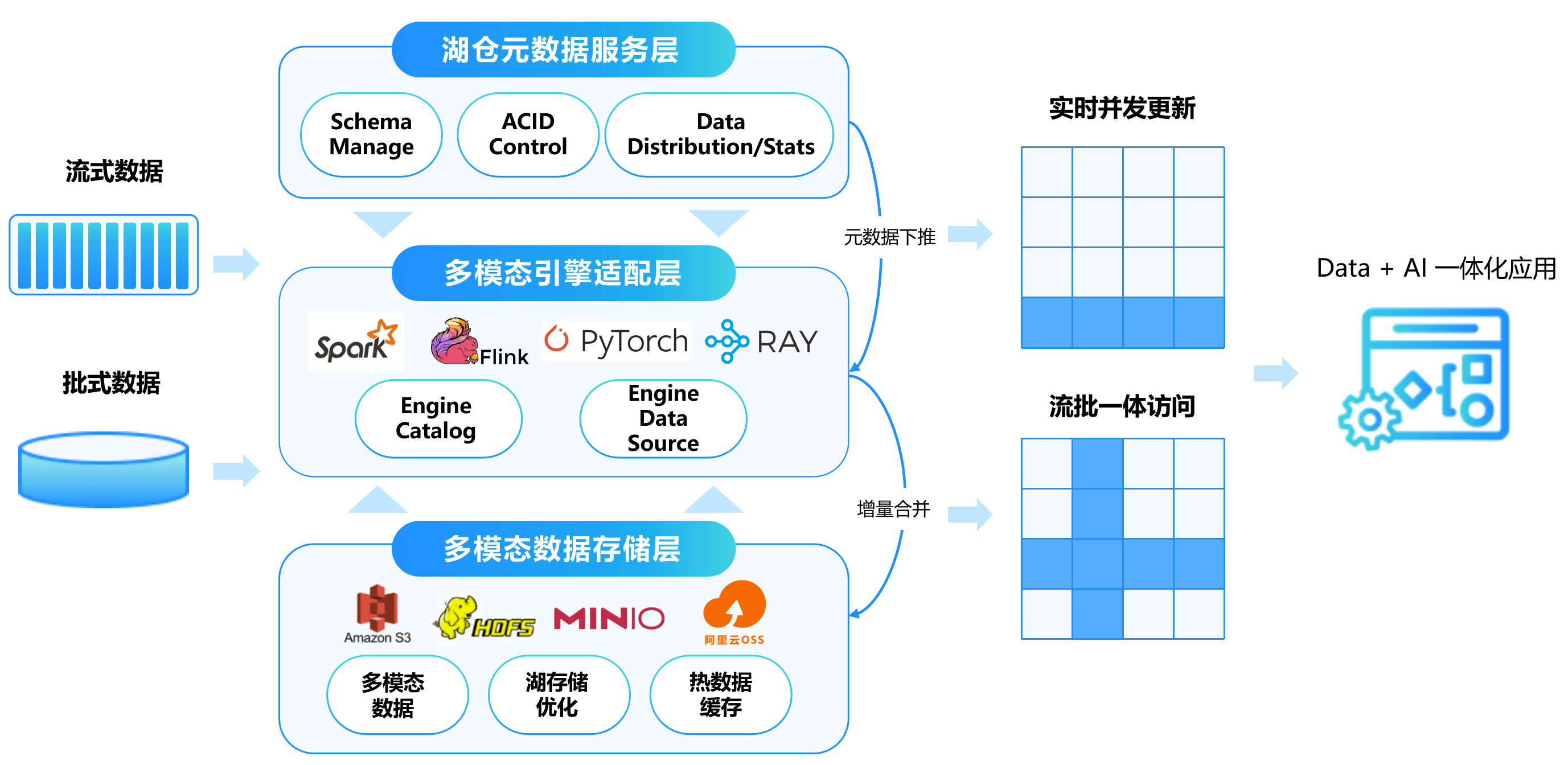

Traditional data architectures suffer from slow response times, high cost, an inability to unify real-time and batch data, and difficulty scaling. LakeSoul provides lakehouse storage designed to address these problems. It offers high-concurrency, high-throughput read and write capabilities and complete data warehouse management in the cloud, and exposes them to a range of computing engines in a uniform way.

Efficient and extensible Catalog metadata service

Concurrent writes and ACID transactions

Incremental writes and upserts

Real-time Lakehouse

Multimodal fusion retrieval

Open

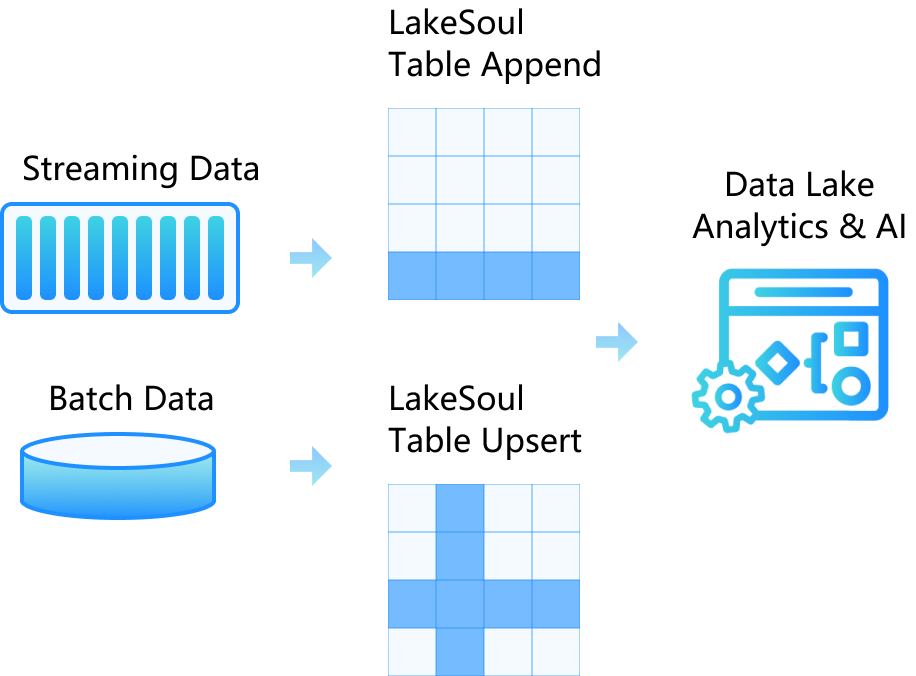

Unified stream-batch table storage

Rich application scenarios that meet diverse business requirements and help unlock business value

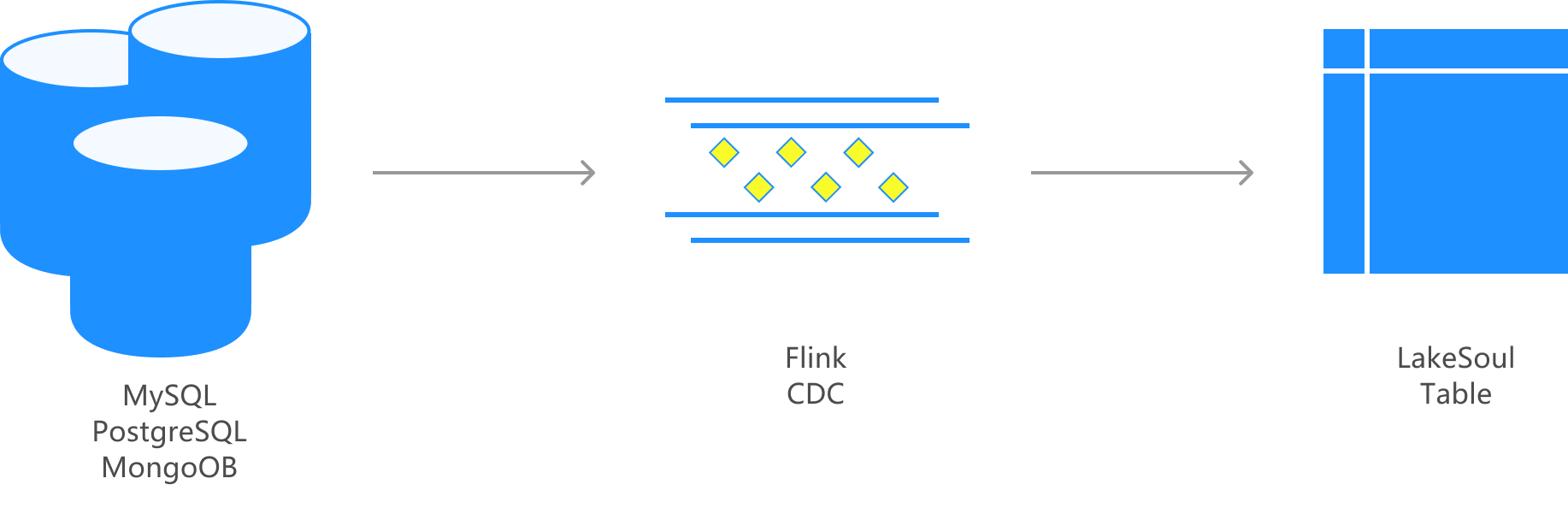

Fast real-time data ingestion into the lake

Flink CDC ingests data from the source in real time, with no T+1 batch import and no Kafka deployment required.

Example: real-time lake ingestion of an online database for report analysis

With only a few configuration settings, such as the online data source, you can start whole-database synchronization and a real-time ingestion task. The task automatically detects new tables and synchronizes table schema changes without manual operation and maintenance. Online data is updated in the lakehouse in real time. BI reports and large-screen dashboards connect seamlessly and refresh in real time, so key business indicators are available at any time to support business decisions.
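To make the idea of change-data synchronization concrete, here is a minimal sketch in plain Python of how a stream of change events can be folded into a table keyed by primary key. It does not use LakeSoul or Flink CDC; the event shape (`op`, `row`), the `apply_changes` helper, and the `id` key column are all assumptions chosen for illustration.

```python
from typing import Any, Dict, Iterable, Tuple

Row = Dict[str, Any]


def apply_changes(
    table: Dict[Any, Row],
    events: Iterable[Tuple[str, Row]],
    key: str = "id",  # hypothetical primary-key column name
) -> Dict[Any, Row]:
    """Apply change events, in order, to a table keyed by primary key."""
    for op, row in events:
        pk = row[key]
        if op == "delete":
            # Remove the row if it exists; ignore deletes for unknown keys.
            table.pop(pk, None)
        elif op in ("insert", "update"):
            # Upsert: new column values overwrite old ones, and columns
            # missing from the event keep their previous values.
            table[pk] = {**table.get(pk, {}), **row}
        else:
            raise ValueError(f"unknown operation: {op}")
    return table


# Illustrative events, as they might arrive from an online database.
events = [
    ("insert", {"id": 1, "name": "alice", "city": "Paris"}),
    ("insert", {"id": 2, "name": "bob", "city": "Rome"}),
    ("update", {"id": 1, "city": "Berlin"}),
    ("delete", {"id": 2}),
]

table = apply_changes({}, events)
print(table)
```

Because each event is merged by key rather than appended, replaying the same events in the same order yields the same final table, which is the property that lets reports read a consistent, current view.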

Real-time Report Analysis

Building on unified stream-batch updates, data extraction and transformation are developed in SQL, which simplifies ETL and data analysis.

RAG Intelligent Expert System

LakeSoul provides a native Python interface that integrates with mainstream AI frameworks such as PyTorch for direct data access, training, and inference. It can also support large-model training and RAG applications.
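As a rough illustration of the retrieval step in a RAG application, the sketch below ranks stored documents by cosine similarity to a query vector and returns the top matches as context. It is plain Python and not LakeSoul's Python interface; the `cosine` and `top_k` helpers and the hand-written three-dimensional vectors are assumptions, and in practice the embeddings would come from an embedding model.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(
    query: Sequence[float],
    docs: List[Tuple[str, Sequence[float]]],
    k: int = 2,
) -> List[str]:
    """Return the texts of the k documents most similar to the query."""
    ranked = sorted(docs, key=lambda d: cosine(query, d[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


# Toy corpus: (text, embedding) pairs with made-up vectors.
docs = [
    ("upsert guide", [1.0, 0.0, 0.0]),
    ("cdc setup", [0.0, 1.0, 0.0]),
    ("upsert faq", [0.9, 0.1, 0.0]),
]

context = top_k([1.0, 0.0, 0.0], docs, k=2)
print(context)
```

The retrieved texts would then be passed to a language model as context alongside the user's question.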

AI Expert Consultation

Join the community and share data intelligence